Manually provision on-premises nodes

Use the following procedure to manually provision nodes for your on-premises provider configuration. Manual provisioning applies in the following cases:

- Your SSH user has sudo privileges that require a password: assisted manual provisioning.

- Your SSH user does not have sudo privileges at all: fully manual provisioning.

If the SSH user configured in the on-premises provider does not have sudo privileges, then you must set up each of the database nodes manually using the following procedure.

You still need access to a user with sudo privileges to complete these steps.

For each node, perform the following:

- Set up time synchronization

- Open incoming TCP ports

- Manually pre-provision the node

- Install Prometheus node exporter

- Install backup utilities

- Set crontab permissions

- Install systemd-related database service unit files (optional)

- Install the node agent

After you have provisioned the nodes, you can proceed to Add instances to the on-prem provider.

Set up time synchronization

A local Network Time Protocol (NTP) server or equivalent must be available.

Ensure that an NTP-compatible time service client is installed in the node OS (chrony is installed by default in the standard AlmaLinux 8 instance used in this example). Then configure the time service client to use the available time server. The pre-provisioning procedure later on this page includes this step and assumes chrony is the installed client.
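If you script the chrony part of this step, making the edit idempotent keeps reruns from stacking duplicate `server` lines. The following is a minimal sketch, assuming chrony reads `/etc/chrony.conf`; the function name `add_chrony_server` is hypothetical and not part of YugabyteDB Anywhere.

```shell
#!/usr/bin/env bash
# Sketch: idempotently point chrony at a local NTP server.
# Assumptions: chrony is the time client (as in the AlmaLinux 8 example) and
# its config file is /etc/chrony.conf unless another path is passed.
# Writing /etc/chrony.conf requires root.

# add_chrony_server <server-ip> [config-file]
# Appends "server <ip> prefer iburst" only if that exact line is not present.
add_chrony_server() {
  local server="$1"
  local conf="${2:-/etc/chrony.conf}"
  local line="server ${server} prefer iburst"
  if grep -qxF "$line" "$conf" 2>/dev/null; then
    return 0  # already configured; nothing to do
  fi
  printf '%s\n' "$line" >> "$conf"
}
```

For example, as root run `add_chrony_server 10.0.0.5` (a placeholder address), then `systemctl restart chronyd` so the daemon rereads its configuration, `chronyc makestep` to force the sync, and `chronyc sources` to confirm the server is listed.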

Open incoming TCP/IP ports

Database servers need incoming TCP access enabled on the following ports for communication among themselves and with YugabyteDB Anywhere:

| Protocol | Port | Description |
|---|---|---|
| TCP | 22 | SSH (for automatic administration) |
| TCP | 5433 | YSQL client |
| TCP | 6379 | YEDIS client |
| TCP | 7000 | YB master webserver |
| TCP | 7100 | YB master RPC |
| TCP | 9000 | YB tablet server webserver |
| TCP | 9042 | YCQL client |
| TCP | 9090 | Prometheus server |
| TCP | 9100 | YB tablet server RPC |
| TCP | 9300 | Prometheus node exporter |
| TCP | 12000 | YCQL HTTP (for DB statistics gathering) |
| TCP | 13000 | YSQL HTTP (for DB statistics gathering) |
| TCP | 18018 | YB Controller |

The preceding table is based on the information on the default ports page.
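This section does not name a firewall tool. As a sketch, assuming the nodes run firewalld and that its `public` zone faces YugabyteDB Anywhere and the other database nodes, the ports in the table could be opened as follows. The function `open_yb_ports` and the `DRY_RUN` switch are illustrative names, not part of any YugabyteDB tooling.

```shell
#!/usr/bin/env bash
# Sketch: open the TCP ports from the table with firewalld.
# Assumptions: firewalld is the host firewall and the zone to modify is
# "public" unless another zone is passed. Set DRY_RUN=1 to print the
# commands instead of running them.

YB_TCP_PORTS=(22 5433 6379 7000 7100 9000 9042 9090 9100 9300 12000 13000 18018)

# open_yb_ports [zone]
open_yb_ports() {
  local zone="${1:-public}"
  local run=(sudo)
  if [ "${DRY_RUN:-0}" = "1" ]; then
    run=(echo sudo)  # print instead of execute
  fi
  local port
  for port in "${YB_TCP_PORTS[@]}"; do
    "${run[@]}" firewall-cmd --permanent --zone="$zone" --add-port="${port}/tcp"
  done
  # Permanent rules only take effect in the running firewall after a reload.
  "${run[@]}" firewall-cmd --reload
}
```

Run `DRY_RUN=1 open_yb_ports` to review the commands, then `open_yb_ports` to apply them. If your nodes rely on a cloud security group or a different firewall instead, apply the same port list there.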

Pre-provision nodes manually

This process carries out all provisioning tasks on the database nodes that require elevated privileges. After the database nodes have been prepared in this way, the universe creation process in YugabyteDB Anywhere connects to the nodes only as the yugabyte user and does not require any elevation of privileges to deploy and operate the YugabyteDB universe.

Physical nodes (or cloud instances) are installed with a standard AlmaLinux 8 server image. Perform the following steps on each node before creating a universe:

- Log in to each database node as a user with sudo enabled (for example, the ec2-user user in AWS).

- Add the following line to the /etc/chrony.conf file:

    server <your-time-server-IP-address> prefer iburst

  Then restart chronyd so that it loads the new server, and force an immediate sync:

    sudo systemctl restart chronyd
    sudo chronyc makestep # (force instant sync to NTP server)

- Add a new yugabyte:yugabyte user and group with the default login shell /bin/bash, which you set via the -s flag, as follows:

    sudo useradd -s /bin/bash --create-home --home-dir <yugabyte_home> yugabyte # (add user yugabyte and create its home directory as specified in <yugabyte_home>)
    sudo passwd yugabyte # (add a password to the yugabyte user)
    sudo su - yugabyte # (change to yugabyte user for execution of next steps)

  <yugabyte_home> is the path to the yugabyte user's home directory. If you set a custom path for the yugabyte user's home in the YugabyteDB Anywhere UI, you must use the same path here. Otherwise, you can omit the --home-dir flag.

  Ensure that the yugabyte user has permissions to SSH into the YugabyteDB nodes (as defined in /etc/ssh/sshd_config).

- If the node is running SELinux and the home directory is not the default, set the correct SELinux ssh context, as follows:

    chcon -R -t ssh_home_t <yugabyte_home>

- Copy the SSH public key to each DB node. This public key should correspond to the private key entered into the YugabyteDB Anywhere provider.

- Run the following commands as the yugabyte user, after copying the SSH public key file to the user home directory:

    cd ~yugabyte
    mkdir .ssh
    chmod 700 .ssh
    cat <pubkey_file> >> .ssh/authorized_keys
    chmod 400 .ssh/authorized_keys
    exit # (exit from the yugabyte user back to previous user)

- Add the following lines to the /etc/security/limits.conf file (sudo is required):

    * - core unlimited
    * - data unlimited
    * - fsize unlimited
    * - sigpending 119934
    * - memlock 64
    * - rss unlimited
    * - nofile 1048576
    * - msgqueue 819200
    * - stack 8192
    * - cpu unlimited
    * - nproc 12000
    * - locks unlimited

- Modify the following line in the /etc/security/limits.d/20-nproc.conf file:

    * soft nproc 12000

- Install the rsync and OpenSSL packages (if not already included with your Linux distribution) using the following commands:

    sudo dnf install openssl
    sudo dnf install rsync

  For airgapped environments, make sure your DNF repository mirror contains these packages.

- If running on a virtual machine, execute the following to tune kernel settings:

  - Configure the parameter vm.swappiness as follows:

      sudo bash -c 'sysctl vm.swappiness=0 >> /etc/sysctl.conf'
      sudo sysctl kernel.core_pattern=/home/yugabyte/cores/core_%p_%t_%E

  - Configure the parameter vm.max_map_count as follows:

      sudo sysctl -w vm.max_map_count=262144
      sudo bash -c 'sysctl vm.max_map_count=262144 >> /etc/sysctl.conf'

  - Validate the change as follows:

      sysctl vm.max_map_count

- Perform the following to prepare and mount the data volume (separate partition for database data):

  - List the available storage volumes, as follows:

      lsblk

  - Perform the following steps for each available volume (all listed volumes other than the root volume), replacing /dev/nvme1n1 with the device name that lsblk reports:

      sudo mkdir /data # (or /data1, /data2 etc)
      sudo mkfs -t xfs /dev/nvme1n1 # (create xfs filesystem over entire volume)
      sudo vi /etc/fstab

  - Add the following line to /etc/fstab:

      /dev/nvme1n1 /data xfs noatime 0 0

  - Exit from vi, and continue, as follows:

      sudo mount -av # (mounts the new volume using the fstab entry, to validate)
      sudo chown yugabyte:yugabyte /data
      sudo chmod 755 /data
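After completing these steps, you may want to confirm that the persistent settings landed where expected. The following is a minimal sketch, assuming the default file locations; the helper names `sysctl_conf_value` and `has_limit` are hypothetical. Note that `sysctl key=value >> /etc/sysctl.conf` appends sysctl's own echo (for example, `vm.swappiness = 0`), which is valid sysctl.conf syntax, so the parser accepts spaces around `=`.

```shell
#!/usr/bin/env bash
# Sketch: verify persisted kernel and ulimit settings from the steps above.
# File paths are arguments so the checks can also be pointed at copies.

# sysctl_conf_value <file> <key>: print the last value assigned to <key>
# (later lines win, as they do when sysctl loads the file).
sysctl_conf_value() {
  awk -F'=' -v key="$2" '
    /^[ \t]*[#;]/ { next }            # skip comment lines
    {
      k = $1
      gsub(/[ \t]/, "", k)            # "vm.swappiness " -> "vm.swappiness"
      if (k == key) {
        v = $2
        gsub(/^[ \t]+|[ \t]+$/, "", v)
        last = v
        seen = 1
      }
    }
    END { if (seen) print last }
  ' "$1"
}

# has_limit <file> <domain> <type> <item> <value>: succeed if a matching
# limits.conf entry exists, regardless of column spacing.
has_limit() {
  awk -v d="$2" -v t="$3" -v i="$4" -v val="$5" '
    $1 == d && $2 == t && $3 == i && $4 == val { found = 1 }
    END { exit !found }
  ' "$1"
}
```

For example, `sysctl_conf_value /etc/sysctl.conf vm.swappiness` should print `0`, and `has_limit /etc/security/limits.conf '*' - nofile 1048576` should succeed. `sysctl -n vm.swappiness` shows the live value.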

Install Prometheus node exporter

Download version 1.7.0 of the Prometheus node exporter, as follows:

wget https://github.com/prometheus/node_exporter/releases/download/v1.7.0/node_exporter-1.7.0.linux-amd64.tar.gz

If you are doing an airgapped installation, download the node exporter using a computer connected to the internet and copy it over to the database nodes.

On each node, perform the following as a user with sudo access:

- Copy the node_exporter-1.7.0.linux-amd64.tar.gz package file that you downloaded into the /tmp directory on each of the YugabyteDB nodes. Ensure that this file is readable by the user (for example, ec2-user).

- Run the following commands:

    sudo mkdir /opt/prometheus
    sudo mkdir /etc/prometheus
    sudo mkdir /var/log/prometheus
    sudo mkdir /var/run/prometheus
    sudo mkdir -p /tmp/yugabyte/metrics
    sudo mv /tmp/node_exporter-1.7.0.linux-amd64.tar.gz /opt/prometheus
    sudo adduser --shell /bin/bash prometheus # (also adds group "prometheus")
    sudo chown -R prometheus:prometheus /opt/prometheus
    sudo chown -R prometheus:prometheus /etc/prometheus
    sudo chown -R prometheus:prometheus /var/log/prometheus
    sudo chown -R prometheus:prometheus /var/run/prometheus
    sudo chown -R yugabyte:yugabyte /tmp/yugabyte/metrics
    sudo chmod -R 755 /tmp/yugabyte/metrics
    sudo chmod +r /opt/prometheus/node_exporter-1.7.0.linux-amd64.tar.gz
    sudo su - prometheus # (user session is now as user "prometheus")

- Run the following commands as user prometheus:

    cd /opt/prometheus
    tar zxf node_exporter-1.7.0.linux-amd64.tar.gz
    exit # (exit from prometheus user back to previous user)

- Edit the following file:

    sudo vi /etc/systemd/system/node_exporter.service

  Add the following to the /etc/systemd/system/node_exporter.service file:

    [Unit]
    Description=node_exporter - Exporter for machine metrics.
    Documentation=https://github.com/William-Yeh/ansible-prometheus
    After=network.target

    [Install]
    WantedBy=multi-user.target

    [Service]
    Type=simple
    User=prometheus
    Group=prometheus
    ExecStart=/opt/prometheus/node_exporter-1.7.0.linux-amd64/node_exporter --web.listen-address=:9300 --collector.textfile.directory=/tmp/yugabyte/metrics

- Exit from vi, and continue, as follows:

    sudo systemctl daemon-reload
    sudo systemctl enable node_exporter
    sudo systemctl start node_exporter

- Check the status of the node_exporter service with the following command:

    sudo systemctl status node_exporter
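Beyond `systemctl status`, you can check that the exporter actually serves metrics on port 9300. This is a sketch, assuming curl is installed and that node exporter exposes its `node_exporter_build_info` metric; the function names are hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: confirm node_exporter answers on the port set in the unit file
# (--web.listen-address=:9300).
# Assumption: node_exporter publishes a node_exporter_build_info metric.

# metrics_look_valid: read Prometheus text-format metrics on stdin and
# succeed if the node_exporter build info metric is present.
metrics_look_valid() {
  grep -q '^node_exporter_build_info{'
}

# check_node_exporter [host] [port]
check_node_exporter() {
  local host="${1:-localhost}" port="${2:-9300}"
  curl -fsS --max-time 5 "http://${host}:${port}/metrics" | metrics_look_valid
}
```

Run `check_node_exporter` on the node, or `check_node_exporter <node-ip>` from the YugabyteDB Anywhere host to also confirm that port 9300 is reachable through the firewall.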

Install backup utilities

YugabyteDB Anywhere supports backing up YugabyteDB to Amazon S3, Azure Storage, Google Cloud Storage, and Network File System (NFS). For more information, see Configure backup storage.

Install the backup utility for the backup storage you plan to use, as follows:

- NFS: rsync, which YugabyteDB Anywhere uses to perform NFS backups, was installed during one of the previous steps.

- Amazon S3: Install s3cmd, on which YugabyteDB Anywhere relies to support copying backups to Amazon S3. You have the following installation options:

  - For a regular installation, execute the following:

      sudo dnf install s3cmd

  - For an airgapped installation, copy /opt/third-party/s3cmd-2.0.1.tar.gz from the YugabyteDB Anywhere node to the database node, and then extract it into the /usr/local directory on the database node, as follows:

      cd /usr/local
      sudo tar xvfz path-to-s3cmd-2.0.1.tar.gz
      sudo ln -s /usr/local/s3cmd-2.0.1/s3cmd /usr/local/bin/s3cmd

- Azure Storage: Install azcopy using one of the following options:

  - Download azcopy_linux_amd64_10.13.0.tar.gz using the following command:

      wget https://azcopyvnext.azureedge.net/release20211027/azcopy_linux_amd64_10.13.0.tar.gz

  - For airgapped installations, copy /opt/third-party/azcopy_linux_amd64_10.13.0.tar.gz from the YugabyteDB Anywhere node, and then extract the azcopy binary into /usr/local/bin, as follows:

      cd /usr/local
      sudo tar xfz path-to-azcopy_linux_amd64_10.13.0.tar.gz -C /usr/local/bin azcopy_linux_amd64_10.13.0/azcopy --strip-components 1

- Google Cloud Storage: Install gsutil using one of the following options:

  - Download gsutil_4.60.tar.gz using the following command:

      wget https://storage.googleapis.com/pub/gsutil_4.60.tar.gz

  - For airgapped installations, copy /opt/third-party/gsutil_4.60.tar.gz from the YugabyteDB Anywhere node, and then extract it into /usr/local, as follows:

      cd /usr/local
      sudo tar xvfz gsutil_4.60.tar.gz
      sudo ln -s /usr/local/gsutil/gsutil /usr/local/bin/gsutil
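To confirm that the utility for your chosen storage is usable, a small check can help. This is a sketch; the storage labels `nfs`, `s3`, `azure`, and `gcs` and the function names are hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: map the planned backup storage to the utility installed above
# and check that it is on the PATH of the current user.
# The storage labels (nfs, s3, azure, gcs) are chosen for this example.

backup_tool_for() {
  case "$1" in
    nfs)   echo rsync ;;
    s3)    echo s3cmd ;;
    azure) echo azcopy ;;
    gcs)   echo gsutil ;;
    *)     return 1 ;;
  esac
}

# check_backup_tool <storage>: exit 0 if found, 1 if missing, 2 if the
# storage label is unknown.
check_backup_tool() {
  local tool
  tool=$(backup_tool_for "$1") || { echo "unknown storage: $1" >&2; return 2; }
  if command -v "$tool" >/dev/null 2>&1; then
    echo "$tool found at $(command -v "$tool")"
  else
    echo "$tool not found on PATH" >&2
    return 1
  fi
}
```

Run it as the yugabyte user (for example, `check_backup_tool s3`) so that the PATH being checked is the one that user has.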

Set crontab permissions

YugabyteDB Anywhere supports performing YugabyteDB liveness checks, log file management, and core file management using cron jobs.

Note that sudo is required to set up this service.

If YugabyteDB Anywhere will be using cron jobs, ensure that the yugabyte user is allowed to run crontab:

- If you are using the cron.allow file to manage crontab access, add the yugabyte user to this file.
- If you are using the cron.deny file, remove the yugabyte user from this file.

If you are not using either file, no changes are required.
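The precedence between the two files can be checked with a short function: if cron.allow exists, the user must be listed there; otherwise, if cron.deny exists, the user must not be listed. This is a sketch; `crontab_allowed` is a hypothetical name, and treating the no-file case as allowed follows the statement above (cron implementations can differ on that case).

```shell
#!/usr/bin/env bash
# Sketch: decide whether a user may run crontab based on cron.allow and
# cron.deny. Paths default to /etc/cron.allow and /etc/cron.deny.
# When neither file exists, this sketch reports "allowed", matching the
# documentation text; check your cron implementation if that matters.

# crontab_allowed <user> [allow-file] [deny-file]
crontab_allowed() {
  local user="$1"
  local allow="${2:-/etc/cron.allow}"
  local deny="${3:-/etc/cron.deny}"
  if [ -e "$allow" ]; then
    grep -qxF "$user" "$allow"      # must be listed
  elif [ -e "$deny" ]; then
    ! grep -qxF "$user" "$deny"     # must not be listed
  else
    return 0
  fi
}
```

For example, `crontab_allowed yugabyte && echo allowed`.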

Install systemd-related database service unit files

As an alternative to setting crontab permissions, you can install systemd-specific database service unit files, as follows:

- Enable the yugabyte user to run the following commands as sudo or root:

    yugabyte ALL=(ALL:ALL) NOPASSWD: \
      /bin/systemctl start yb-master, \
      /bin/systemctl stop yb-master, \
      /bin/systemctl restart yb-master, \
      /bin/systemctl enable yb-master, \
      /bin/systemctl disable yb-master, \
      /bin/systemctl start yb-tserver, \
      /bin/systemctl stop yb-tserver, \
      /bin/systemctl restart yb-tserver, \
      /bin/systemctl enable yb-tserver, \
      /bin/systemctl disable yb-tserver, \
      /bin/systemctl start yb-controller, \
      /bin/systemctl stop yb-controller, \
      /bin/systemctl restart yb-controller, \
      /bin/systemctl enable yb-controller, \
      /bin/systemctl disable yb-controller, \
      /bin/systemctl start yb-bind_check.service, \
      /bin/systemctl stop yb-bind_check.service, \
      /bin/systemctl restart yb-bind_check.service, \
      /bin/systemctl enable yb-bind_check.service, \
      /bin/systemctl disable yb-bind_check.service, \
      /bin/systemctl start yb-zip_purge_yb_logs.timer, \
      /bin/systemctl stop yb-zip_purge_yb_logs.timer, \
      /bin/systemctl restart yb-zip_purge_yb_logs.timer, \
      /bin/systemctl enable yb-zip_purge_yb_logs.timer, \
      /bin/systemctl disable yb-zip_purge_yb_logs.timer, \
      /bin/systemctl start yb-clean_cores.timer, \
      /bin/systemctl stop yb-clean_cores.timer, \
      /bin/systemctl restart yb-clean_cores.timer, \
      /bin/systemctl enable yb-clean_cores.timer, \
      /bin/systemctl disable yb-clean_cores.timer, \
      /bin/systemctl start yb-collect_metrics.timer, \
      /bin/systemctl stop yb-collect_metrics.timer, \
      /bin/systemctl restart yb-collect_metrics.timer, \
      /bin/systemctl enable yb-collect_metrics.timer, \
      /bin/systemctl disable yb-collect_metrics.timer, \
      /bin/systemctl start yb-zip_purge_yb_logs, \
      /bin/systemctl stop yb-zip_purge_yb_logs, \
      /bin/systemctl restart yb-zip_purge_yb_logs, \
      /bin/systemctl enable yb-zip_purge_yb_logs, \
      /bin/systemctl disable yb-zip_purge_yb_logs, \
      /bin/systemctl start yb-clean_cores, \
      /bin/systemctl stop yb-clean_cores, \
      /bin/systemctl restart yb-clean_cores, \
      /bin/systemctl enable yb-clean_cores, \
      /bin/systemctl disable yb-clean_cores, \
      /bin/systemctl start yb-collect_metrics, \
      /bin/systemctl stop yb-collect_metrics, \
      /bin/systemctl restart yb-collect_metrics, \
      /bin/systemctl enable yb-collect_metrics, \
      /bin/systemctl disable yb-collect_metrics, \
      /bin/systemctl daemon-reload

- Ensure that you have root access and add the following service and timer files to the /etc/systemd/system directory (set their ownership to the yugabyte user and 0644 permissions). The files assume the default yugabyte home directory, /home/yugabyte; if you set a custom home directory, adjust the paths accordingly.

  yb-master.service:

    [Unit]
    Description=Yugabyte master service
    Requires=network-online.target
    After=network.target network-online.target multi-user.target
    StartLimitInterval=100
    StartLimitBurst=10

    [Path]
    PathExists=/home/yugabyte/master/bin/yb-master
    PathExists=/home/yugabyte/master/conf/server.conf

    [Service]
    User=yugabyte
    Group=yugabyte
    # Start
    ExecStart=/home/yugabyte/master/bin/yb-master --flagfile /home/yugabyte/master/conf/server.conf
    Restart=on-failure
    RestartSec=5
    # Stop -> SIGTERM - 10s - SIGKILL (if not stopped) [matches existing cron behavior]
    KillMode=process
    TimeoutStopFailureMode=terminate
    KillSignal=SIGTERM
    TimeoutStopSec=10
    FinalKillSignal=SIGKILL
    # Logs
    StandardOutput=syslog
    StandardError=syslog
    # ulimit
    LimitCORE=infinity
    LimitNOFILE=1048576
    LimitNPROC=12000

    [Install]
    WantedBy=default.target

  yb-tserver.service:

    [Unit]
    Description=Yugabyte tserver service
    Requires=network-online.target
    After=network.target network-online.target multi-user.target
    StartLimitInterval=100
    StartLimitBurst=10

    [Path]
    PathExists=/home/yugabyte/tserver/bin/yb-tserver
    PathExists=/home/yugabyte/tserver/conf/server.conf

    [Service]
    User=yugabyte
    Group=yugabyte
    # Start
    ExecStart=/home/yugabyte/tserver/bin/yb-tserver --flagfile /home/yugabyte/tserver/conf/server.conf
    Restart=on-failure
    RestartSec=5
    # Stop -> SIGTERM - 10s - SIGKILL (if not stopped) [matches existing cron behavior]
    KillMode=process
    TimeoutStopFailureMode=terminate
    KillSignal=SIGTERM
    TimeoutStopSec=10
    FinalKillSignal=SIGKILL
    # Logs
    StandardOutput=syslog
    StandardError=syslog
    # ulimit
    LimitCORE=infinity
    LimitNOFILE=1048576
    LimitNPROC=12000

    [Install]
    WantedBy=default.target

  yb-zip_purge_yb_logs.service:

    [Unit]
    Description=Yugabyte logs
    Wants=yb-zip_purge_yb_logs.timer

    [Service]
    User=yugabyte
    Group=yugabyte
    Type=oneshot
    WorkingDirectory=/home/yugabyte/bin
    ExecStart=/bin/sh /home/yugabyte/bin/zip_purge_yb_logs.sh

    [Install]
    WantedBy=multi-user.target

  yb-zip_purge_yb_logs.timer:

    [Unit]
    Description=Yugabyte logs
    Requires=yb-zip_purge_yb_logs.service

    [Timer]
    User=yugabyte
    Group=yugabyte
    Unit=yb-zip_purge_yb_logs.service
    # Run hourly at minute 0 (beginning) of every hour
    OnCalendar=00/1:00

    [Install]
    WantedBy=timers.target

  yb-clean_cores.service:

    [Unit]
    Description=Yugabyte clean cores
    Wants=yb-clean_cores.timer

    [Service]
    User=yugabyte
    Group=yugabyte
    Type=oneshot
    WorkingDirectory=/home/yugabyte/bin
    ExecStart=/bin/sh /home/yugabyte/bin/clean_cores.sh

    [Install]
    WantedBy=multi-user.target

  yb-controller.service:

    [Unit]
    Description=Yugabyte Controller
    Requires=network-online.target
    After=network.target network-online.target multi-user.target
    StartLimitInterval=100
    StartLimitBurst=10

    [Path]
    PathExists=/home/yugabyte/controller/bin/yb-controller-server
    PathExists=/home/yugabyte/controller/conf/server.conf

    [Service]
    User=yugabyte
    Group=yugabyte
    # Start
    ExecStart=/home/yugabyte/controller/bin/yb-controller-server \
        --flagfile /home/yugabyte/controller/conf/server.conf
    Restart=always
    RestartSec=5
    # Stop -> SIGTERM - 10s - SIGKILL (if not stopped) [matches existing cron behavior]
    KillMode=control-group
    TimeoutStopFailureMode=terminate
    KillSignal=SIGTERM
    TimeoutStopSec=10
    FinalKillSignal=SIGKILL
    # Logs
    StandardOutput=syslog
    StandardError=syslog
    # ulimit
    LimitCORE=infinity
    LimitNOFILE=1048576
    LimitNPROC=12000

    [Install]
    WantedBy=default.target

  yb-clean_cores.timer:

    [Unit]
    Description=Yugabyte clean cores
    Requires=yb-clean_cores.service

    [Timer]
    User=yugabyte
    Group=yugabyte
    Unit=yb-clean_cores.service
    # Run every 10 minutes, 30 seconds past minutes 0, 10, 20, ... of every hour
    OnCalendar=*:0/10:30

    [Install]
    WantedBy=timers.target

  yb-collect_metrics.service:

    [Unit]
    Description=Yugabyte collect metrics
    Wants=yb-collect_metrics.timer

    [Service]
    User=yugabyte
    Group=yugabyte
    Type=oneshot
    WorkingDirectory=/home/yugabyte/bin
    ExecStart=/bin/bash /home/yugabyte/bin/collect_metrics_wrapper.sh

    [Install]
    WantedBy=multi-user.target

  yb-collect_metrics.timer:

    [Unit]
    Description=Yugabyte collect metrics
    Requires=yb-collect_metrics.service

    [Timer]
    User=yugabyte
    Group=yugabyte
    Unit=yb-collect_metrics.service
    # Run every 1 minute
    OnCalendar=*:0/1:0

    [Install]
    WantedBy=timers.target

  yb-bind_check.service:

    [Unit]
    Description=Yugabyte IP bind check
    Requires=network-online.target
    After=network.target network-online.target multi-user.target
    Before=yb-controller.service yb-tserver.service yb-master.service yb-collect_metrics.timer
    StartLimitInterval=100
    StartLimitBurst=10

    [Path]
    PathExists=/home/yugabyte/controller/bin/yb-controller-server
    PathExists=/home/yugabyte/controller/conf/server.conf

    [Service]
    # Start
    ExecStart=/home/yugabyte/controller/bin/yb-controller-server \
        --flagfile /home/yugabyte/controller/conf/server.conf \
        --only_bind --logtostderr
    Type=oneshot
    KillMode=control-group
    KillSignal=SIGTERM
    TimeoutStopSec=10
    # Logs
    StandardOutput=syslog
    StandardError=syslog

    [Install]
    WantedBy=default.target
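Typing the 51-command sudoers rule by hand is error-prone. The following sketch generates it in the same order, assuming you install it as a drop-in file under /etc/sudoers.d and validate it with visudo first; the drop-in file name and the function name `yb_sudoers_rule` are hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: generate the sudoers rule for the yugabyte systemd units.
# Assumption: the output is saved to a drop-in such as
# /etc/sudoers.d/yugabyte after checking it with "visudo -cf".

YB_UNITS=(yb-master yb-tserver yb-controller yb-bind_check.service
  yb-zip_purge_yb_logs.timer yb-clean_cores.timer yb-collect_metrics.timer
  yb-zip_purge_yb_logs yb-clean_cores yb-collect_metrics)
YB_ACTIONS=(start stop restart enable disable)

# yb_sudoers_rule [user]: print the rule, one command per continuation line.
yb_sudoers_rule() {
  local user="${1:-yugabyte}" unit action
  echo "${user} ALL=(ALL:ALL) NOPASSWD: \\"
  for unit in "${YB_UNITS[@]}"; do
    for action in "${YB_ACTIONS[@]}"; do
      echo "  /bin/systemctl ${action} ${unit}, \\"
    done
  done
  # The last command in the list has no trailing comma.
  echo "  /bin/systemctl daemon-reload"
}
```

For example: `yb_sudoers_rule > /tmp/yugabyte.sudoers`, then `sudo visudo -cf /tmp/yugabyte.sudoers`, and if the check passes, `sudo install -m 0440 -o root -g root /tmp/yugabyte.sudoers /etc/sudoers.d/yugabyte`.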

Install node agent

The node agent manages communication between YugabyteDB Anywhere and the node. When node agent is installed, YugabyteDB Anywhere no longer requires SSH or sudo access to the nodes.

For automated and assisted manual provisioning, node agents are installed onto instances automatically when adding instances, or when running the pre-provisioning script using the --install_node_agent flag.

Use the following procedure to install node agent for fully manual provisioning.

Re-provisioning a node

If you are re-provisioning a node (that is, node agent has previously been installed on the node), you need to unregister the node agent before installing node agent again. Refer to Unregister node agent.

To install the YugabyteDB node agent manually, as the yugabyte user, do the following:

- Download the installer from YugabyteDB Anywhere using the API token of the Super Admin, as follows:

    curl https://<yugabytedb_anywhere_address>/api/v1/node_agents/download --fail --header 'X-AUTH-YW-API-TOKEN: <api_token>' > installer.sh && chmod +x installer.sh

  To create an API token, navigate to your User Profile and click Generate Key.

- Verify that the installer.sh file contains the script.

- Run the following command to download the node agent's .tgz file, install it, and start the interactive configuration:

    ./installer.sh -c install -u https://<yba_address>:9000 -t <api_token>

  For example, if you run the following:

    ./installer.sh -c install -u http://10.98.0.42:9000 -t 301fc382-cf06-4a1b-b5ef-0c8c45273aef

  You should get output similar to the following:

    * Starting YB Node Agent install
    * Creating Node Agent Directory
    * Changing directory to node agent
    * Creating Sub Directories
    * Downloading YB Node Agent build package
    * Getting Linux/amd64 package
    * Downloaded Version - 2.17.1.0-PRE_RELEASE
    * Extracting the build package
    * The current value of Node IP is not set; Enter new value or enter to skip: 10.9.198.2
    * The current value of Node Name is not set; Enter new value or enter to skip: Test
    * Select your Onprem Provider
    1. Provider ID: 41ac964d-1db2-413e-a517-2a8d840ff5cd, Provider Name: onprem
      Enter the option number: 1
    * Select your Instance Type
    1. Instance Code: c5.large
      Enter the option number: 1
    * Select your Region
    1. Region ID: dc0298f6-21bf-4f90-b061-9c81ed30f79f, Region Code: us-west-2
      Enter the option number: 1
    * Select your Zone
    1. Zone ID: 99c66b32-deb4-49be-85f9-c3ef3a6e04bc, Zone Name: us-west-2c
      Enter the option number: 1
    • Completed Node Agent Configuration
    • Node Agent Registration Successful
    You can install a systemd service on linux machines by running sudo node-agent-installer.sh -c install_service --user yugabyte (Requires sudo access).

- Run the install_service command as a sudo user:

    sudo node-agent-installer.sh -c install_service --user yugabyte

  This installs node agent as a systemd service. This is required so that the node agent can perform self-upgrade, database installation and configuration, and other functions.

When the installation has been completed, the configurations are saved in the config.yml file located in the node-agent/config/ directory. Do not manually change values in this file.
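For the step that verifies the downloaded installer, a quick check can catch a failed download. With `--fail`, curl writes nothing on an HTTP error, but the shell redirection has already created installer.sh, so a failed download typically leaves an empty file. The following sketch treats an empty file, or one whose first line is not a `#!` interpreter line, as suspect; the shebang expectation is an assumption, and `installer_looks_valid` is a hypothetical name.

```shell
#!/usr/bin/env bash
# Sketch: sanity-check a downloaded installer script.
# Assumption: a good installer starts with a "#!" interpreter line.

# installer_looks_valid [file]: succeed if the file is non-empty and
# starts with "#!".
installer_looks_valid() {
  local file="${1:-installer.sh}"
  [ -s "$file" ] || { echo "$file is missing or empty" >&2; return 1; }
  if head -n 1 "$file" | grep -q '^#!'; then
    return 0
  fi
  echo "$file does not start with #!; first line: $(head -n 1 "$file")" >&2
  return 1
}
```

For example, `installer_looks_valid installer.sh && ./installer.sh -c install -u https://<yba_address>:9000 -t <api_token>`.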

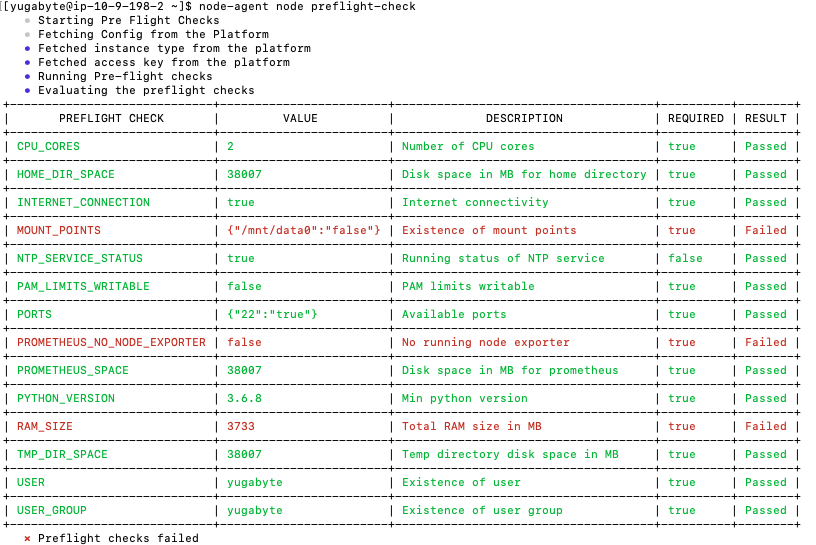

Preflight check

After the node agent is installed, configured, and connected to YugabyteDB Anywhere, you can perform a series of preflight checks without sudo privileges by using the following command:

node-agent node preflight-check

The result of the check is forwarded to YugabyteDB Anywhere for validation, and the validated results are displayed in tabular form in the terminal. If a required check fails, apply a fix and then rerun the preflight check.

Expect an output similar to the following:

If the preflight check is successful, you can add the node to the provider (if required) by executing the following:

node-agent node preflight-check --add_node
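If you want to chain the two commands, the following sketch adds the node only when the preflight check passes. It assumes that `node-agent node preflight-check` exits with a non-zero status when a required check fails, which you should confirm on your version. `preflight_then_add` and the `NODE_AGENT` override (which can name another binary or a shell function used as a test stub) are hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: run the preflight check and add the node only if it passes.
# Assumption: "node-agent node preflight-check" exits non-zero when a
# required check fails. NODE_AGENT defaults to node-agent on the PATH and
# may name another executable or a shell function (for example, a stub).

preflight_then_add() {
  local agent="${NODE_AGENT:-node-agent}"
  if ! "$agent" node preflight-check; then
    echo "Preflight check failed; fix the reported issues and rerun." >&2
    return 1
  fi
  "$agent" node preflight-check --add_node
}
```

Run `preflight_then_add` as the yugabyte user on the node.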

Reconfigure a node agent

If you want to use a node that has already been provisioned in a different provider, you can reconfigure the node agent.

To reconfigure a node for use in a different provider, do the following:

- Remove the node instance from the provider using the following command:

    node-agent node delete-instance

- Run the configure command to start the interactive configuration. This also registers the node agent with YBA.

    node-agent node configure -t <api_token> -u https://<yba_address>:9000

  For example, if you run the following:

    node-agent node configure -t 1ba391bc-b522-4c18-813e-71a0e76b060a -u http://10.98.0.42:9000

  You should get output similar to the following:

    * The current value of Node Name is set to node1; Enter new value or enter to skip:
    * The current value of Node IP is set to 10.9.82.61; Enter new value or enter to skip:
    * Select your Onprem Manually Provisioned Provider.
    1. Provider ID: b56d9395-1dda-47ae-864b-7df182d07fa7, Provider Name: onprem-provision-test1
    * The current value is Provider ID: b56d9395-1dda-47ae-864b-7df182d07fa7, Provider Name: onprem-provision-test1.
      Enter new option number or enter to skip:
    * Select your Instance Type.
    1. Instance Code: c5.large
    * The current value is Instance Code: c5.large.
      Enter new option number or enter to skip:
    * Select your Region.
    1. Region ID: 0a185358-3de0-41f2-b106-149be3bf07dd, Region Code: us-west-2
    * The current value is Region ID: 0a185358-3de0-41f2-b106-149be3bf07dd, Region Code: us-west-2.
      Enter new option number or enter to skip:
    * Select your Zone.
    1. Zone ID: c9904f64-a65b-41d3-9afb-a7249b2715d1, Zone Code: us-west-2a
    * The current value is Zone ID: c9904f64-a65b-41d3-9afb-a7249b2715d1, Zone Code: us-west-2a.
      Enter new option number or enter to skip:
    • Completed Node Agent Configuration
    • Node Agent Registration Successful

If you are running v2.18.5 or earlier, the node must be unregistered first. Use the following procedure:

- If the node instance has been added to a provider, remove the node instance from the provider.

- Stop the systemd service as a sudo user.

    sudo systemctl stop yb-node-agent

- Run the configure command to start the interactive configuration. This also registers the node agent with YBA.

    node-agent node configure -t <api_token> -u https://<yba_address>:9000

- Start the systemd service as a sudo user.

    sudo systemctl start yb-node-agent

- Verify that the service is up.

    sudo systemctl status yb-node-agent

- Run preflight checks and add the node as the yugabyte user.

    node-agent node preflight-check --add_node
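If you script reconfiguration across several installations, the version cutoff above can be encoded as a small test. This is a sketch, assuming you pass the YugabyteDB Anywhere version string (for example, 2.18.5.1) and that every 2.18.5.x build counts as "v2.18.5 or earlier"; `needs_unregister_first` is a hypothetical name, and the comparison relies on GNU `sort -V`.

```shell
#!/usr/bin/env bash
# Sketch: succeed if the given version requires the older procedure
# (unregister first), that is, if it is v2.18.5 or earlier.
# Only major.minor.patch is compared; a leading "v" is ignored.
# Relies on GNU sort -V for version ordering.

needs_unregister_first() {
  local v="${1#v}"
  v=$(printf '%s\n' "$v" | cut -d. -f1-3)
  # If 2.18.5 sorts last (or ties), the given version is not newer.
  [ "$(printf '%s\n%s\n' "$v" 2.18.5 | sort -V | tail -n 1)" = "2.18.5" ]
}
```

For example, `needs_unregister_first 2.18.5.1 && echo "use the older procedure"`.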

Unregister node agent

When performing some tasks, such as re-provisioning a node, you may need to unregister the node agent from the node.

To unregister node agent, run the following command:

node-agent node unregister

After running this command, YBA no longer recognizes the node agent.

If the node agent configuration is corrupted, the command may fail. In this case, unregister the node agent using the API as follows:

- Obtain the node agent ID:

    curl -k --header 'X-AUTH-YW-API-TOKEN:<api_token>' 'https://<yba_address>/api/v1/customers/<customer_id>/node_agents?nodeIp=<node_agent_ip>'

  You should see output similar to the following:

    [{
      "uuid":"ec7654b1-cf5c-4a3b-aee3-b5e240313ed2",
      "name":"node1",
      "ip":"10.9.82.61",
      "port":9070,
      "customerUuid":"f33e3c9b-75ab-4c30-80ad-cba85646ea39",
      "version":"2.18.6.0-PRE_RELEASE",
      "state":"READY",
      "updatedAt":"2023-12-19T23:56:43Z",
      "config":{
        "certPath":"/opt/yugaware/node-agent/certs/f33e3c9b-75ab-4c30-80ad-cba85646ea39/ec7654b1-cf5c-4a3b-aee3-b5e240313ed2/0",
        "offloadable":false
      },
      "osType":"LINUX",
      "archType":"AMD64",
      "home":"/home/yugabyte/node-agent",
      "versionMatched":true,
      "reachable":false
    }]

- Use the value of the field uuid as <node_agent_id> in the following command:

    curl -k -X DELETE --header 'X-AUTH-YW-API-TOKEN:<api_token>' https://<yba_address>/api/v1/customers/<customer_id>/node_agents/<node_agent_id>
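The two API calls can be combined in a script. This sketch extracts the first `uuid` field with grep and cut instead of a JSON parser, so it assumes the response lists a single node agent for the IP. `API_TOKEN`, `YBA_ADDRESS`, and `CUSTOMER_ID` are placeholders you set, and the function names are hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: look up a node agent by IP and delete it through the API.
# Assumptions: API_TOKEN, YBA_ADDRESS, and CUSTOMER_ID are set by you; the
# first "uuid" field in the response is the agent to delete. -k skips TLS
# verification, as in the commands above.

# first_uuid: read JSON on stdin and print the value of the first "uuid"
# key ("customerUuid" does not match because of the leading quote).
first_uuid() {
  grep -o '"uuid": *"[^"]*"' | head -n 1 | cut -d'"' -f4
}

# unregister_node_agent_via_api <node_ip>
unregister_node_agent_via_api() {
  local node_ip="$1" agent_id
  agent_id=$(curl -fsSk --header "X-AUTH-YW-API-TOKEN:${API_TOKEN}" \
    "https://${YBA_ADDRESS}/api/v1/customers/${CUSTOMER_ID}/node_agents?nodeIp=${node_ip}" | first_uuid)
  if [ -z "$agent_id" ]; then
    echo "No node agent found for ${node_ip}" >&2
    return 1
  fi
  curl -fsSk -X DELETE --header "X-AUTH-YW-API-TOKEN:${API_TOKEN}" \
    "https://${YBA_ADDRESS}/api/v1/customers/${CUSTOMER_ID}/node_agents/${agent_id}"
}
```

For example, after exporting the three variables, run `unregister_node_agent_via_api 10.9.82.61`.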

For more information, refer to Node agent FAQ.